Our modern faith in progress embodies a rich harvest of ironies, but one of the richest unfolds from the way it redefines such concepts as improvement and advancement.

To most people nowadays, the way things are done today is by definition more advanced, and therefore better, than the way things were done at any point in the past.

This curious way of thinking, which is all but universal in the industrial world among people who haven’t thought through its implications, starts from the equally widespread belief that all of human history is a straight line that leads to us. It implies in turn that the only way into the future that counts is the one that involves doing even more of what we’re already doing right now.

It’s easy to see why this sort of self-congratulatory thinking is popular, but just now it may also be fatal. The entire industrial way of life is built on the ever-accelerating use of nonrenewable resources – primarily but not only fossil fuels – and it therefore faces an imminent collision with the hard facts of geology, in the form of nonnegotiable limits to how much can be extracted from a finite planet before depletion outruns extraction. When that happens, ways of living that made economic sense in a world of cheap, abundant resources are likely to become nonviable in a hurry, and beliefs that make those ways of living seem inevitable are just another obstacle in the way of the necessary transitions.

Agriculture, the foundation of human subsistence in nearly all of the world’s societies just now, offers a particularly sharp lesson in this regard. It’s extremely common for people to assume that today’s industrial agriculture is by definition more advanced, and thus better, than any of the alternatives. It’s certainly true that the industrial approach to agriculture – using fossil fuel-powered machines to replace human and animal labor, and fossil fuel-derived chemicals to replace natural nutrient cycles that rely on organic matter – outcompeted its rivals in the market economies of the twentieth century, when fossil fuels were so cheap that it made economic sense to use them in place of everything else. That age is ending, however, and the new economics of energy bid fair to drive a revolution in agriculture as sweeping as any we face.

What needs to be recognized here, though, is that in a crucial sense – the ecological sense – modern industrial agriculture is radically less advanced than most of the viable alternatives. To grasp the way this works, it’s necessary to go back to the concept of ecological succession, the theme of several earlier posts on this blog.

Succession, you’ll remember, is the process by which a vacant lot turns into a forest, or any other disturbed ecosystem returns to the complex long-term equilibrium found in a mature ecology. In the course of succession, the first simple communities of pioneer organisms give way to other communities in a largely predictable sequence, ending in a climax community that can maintain itself over centuries. The stages in the process – seres, in the language of ecology – vary sharply in the way they relate to resources, and the differences involved have crucial implications.

Organisms in earlier seres, to use more ecologists’ jargon, tend to be R-selected – that is, their strategy for living depends on controlling as many resources and producing as many offspring as fast as they possibly can, no matter how inefficient this turns out to be. This strategy gets them established in new areas as quickly as possible, but it makes them vulnerable to competition by more efficient organisms later on. Organisms in later seres tend to be K-selected – that is, their strategy for living depends on using resources as efficiently as possible, even when this makes them slow to spread and limits their ability to get into every possible niche. This means they tend to be elbowed out of the way by R-selected organisms early on, but their efficiency gives them the edge in the long term, allowing them to form stable communities.

The difference between earlier and later seres can be described in another way. Earlier seres tend toward what could be called an extractive model of nutrient use. In the dry country of central Oregon, for example, fireweed – a pioneer plant, and strongly R-selected – grows in the aftermath of forest fires, thriving on the abundant nutrients concentrated in wood ash, and on bare disturbed ground where it can monopolize soil nutrients. As it grows, though, it uses up the nutrient concentrations that allow it to thrive, and leaves behind soil with nutrients spread far more diffusely. Eventually, other plants better adapted to less concentrated nutrients replace it. Thus the fireweed becomes its own nemesis.

By contrast, later seres tend toward what could be called a recycling model of nutrient use. The climax community in those same central Oregon drylands is dominated by pines of several species, and in a mature pine forest, most nutrients are either in the living trees themselves or in the thick duff of fallen pine needles that covers the forest floor. The duff soaks up rainwater like a sponge, keeping the soil moist and preventing nutrient loss through runoff; as the duff rots, it releases nutrients into the soil where the pine roots can access them, and also encourages the growth of symbiotic soil fungi that improve the pine’s ability to access nutrients. Thus the pine creates and maintains conditions that foster its own survival.
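For readers who like to see the arithmetic, the contrast between the two models can be put into a toy calculation. What follows is only a rough sketch, not a real ecological model: the function name `simulate`, the starting pool of 100 units, the uptake of 10 units per season, and the 95 percent return rate are all made-up numbers chosen for illustration. The one thing it shows is how much difference it makes whether the nutrients a community takes up find their way back into the soil.

```python
def simulate(pool, uptake, return_fraction, seasons):
    """Track a soil nutrient pool across growing seasons (toy model).

    pool            -- nutrients in the soil at the start (arbitrary units)
    uptake          -- nutrients the plants try to take up each season
    return_fraction -- share of that uptake returned to the soil
                       (0.0 = purely extractive, close to 1.0 = recycling)
    """
    history = [pool]
    for _ in range(seasons):
        taken = min(uptake, pool)  # plants cannot take more than is there
        pool = pool - taken + return_fraction * taken
        history.append(pool)
    return history


# Hypothetical numbers, for illustration only.
extractive = simulate(pool=100.0, uptake=10.0, return_fraction=0.0, seasons=20)
recycling = simulate(pool=100.0, uptake=10.0, return_fraction=0.95, seasons=20)

print("extractive pool after 20 seasons:", extractive[-1])
print("recycling pool after 20 seasons: ", round(recycling[-1], 2))
```

With these assumed figures the extractive community empties its pool in ten seasons and has nothing left to grow on, while the recycling community still holds about nine tenths of what it started with after twenty. Real soils are vastly more complicated, but the direction of the difference is the point.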

Other seres in between the pioneer fireweed and the climax pine fall into the space between these two models. It’s very common across a wide range of ecosystems for the early seres in a process of succession to pass by very quickly, in a few years or less, while later seres take progressively longer, culminating in the immensely slow rate of change of a stable climax community. Like all ecological rules, this one has plenty of exceptions, but the pattern is much more common than not. What makes this even more interesting is that the same pattern also appears in something close to its classic form in the history of agriculture.

The first known systems of grain agriculture emerged in the Middle East sometime before 8000 BCE, in the aftermath of the drastic global warming that followed the end of the last ice age and caused massive ecological disruption throughout the temperate zone. These first farming systems were anything but sustainable, and early agricultural societies followed a steady rhythm of expansion and collapse most likely caused by bad farming practices that failed to return nutrients to the soil. It took millennia and plenty of hard experience to evolve the first farming systems that worked well over the long term, and millennia more to craft truly sustainable methods such as Asian wetland rice culture, which cycles nutrients back into the soil in the form of human and animal manure, and has proved itself over some 4000 years.

This process of agricultural evolution parallels succession down to the fine details. In effect, the first grain farming systems were the equivalents, in human ecology, of pioneer plant seres. Their extractive model of nutrient use guaranteed that over time, they would become their own nemesis and fail to thrive. Later, more sustainable methods correspond to later seres, with the handful of fully sustainable systems corresponding to climax communities with a recycling model of nutrient use and stability measured in millennia.

Factor in the emergence of industrial farming in the early twentieth century, though, and the sequence suddenly slams into reverse. Industrial farming follows an extreme case of the extractive model; the nutrients needed by crops come from fertilizers manufactured from natural gas, rock phosphate, and other nonrenewable resources, and the crops themselves are shipped off to distant markets, taking the nutrients with them. This one-way process maximizes profits in the short term, but it damages the soil, pollutes local ecosystems, and poisons water resources. In a world of accelerating resource depletion, such extravagant use of irreplaceable fossil fuels is also a recipe for failure.

Fortunately, as last week’s post showed, the replacement for this hopelessly unsustainable system – if you will, the next sere in the agricultural succession – is already in place and beginning to expand rapidly into the territory of conventional farming. Modeled closely on the sustainable farming practices of Asia by way of early twentieth-century researchers such as Albert Howard and F.H. King, organic farming moves decisively toward the recycling model by using organic matter and other renewable resources to replace chemical fertilizers, pesticides, and the like. In terms of the modern mythology of progress, this is a step backward, since it abandons chemicals and machines for compost, green manures, and biological pest controls; in terms of succession, it is a step forward, and the beginning of recovery from the great leap backward of industrial agriculture.

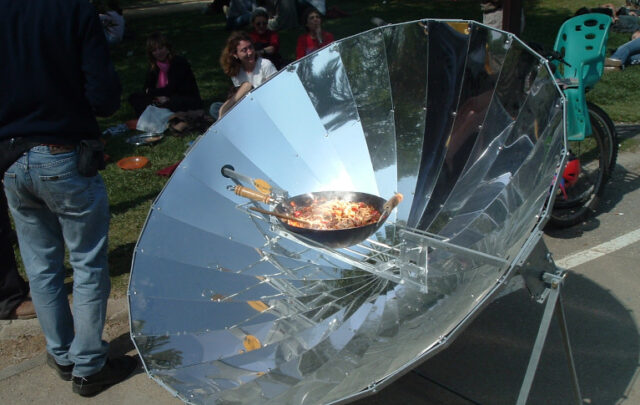

This same model may be worth examining closely when it comes time to deal with some of the other dysfunctional habits that became widespread in the industrial world during the fast-departing age of cheap abundant fossil fuel energy. In any field you care to name, sustainability is about closing the circle, replacing wasteful extractive models of resource use with recycling models that enable resource use to continue without depletion over the long term. It’s a fair bet that in the ecotechnic societies of the future – the climax communities of human technic civilization – the flow of resources through the economy will follow circular paths indistinguishable from the ones that track nutrient flows through a healthy ecosystem. How one of the more necessary of those paths could be crafted will be the subject of next week’s post.